How Governments Regulate Artificial Intelligence: Explained

Published: 9 Mar 2026

Artificial intelligence is growing fast, and governments around the world are trying to control how it works, how it collects data, and how companies use it. The goal is simple: protect people, reduce risks, and guide innovation in a safe direction.

Here is how governments regulate artificial intelligence in practice and which steps they take to keep AI safe, fair, and accountable.

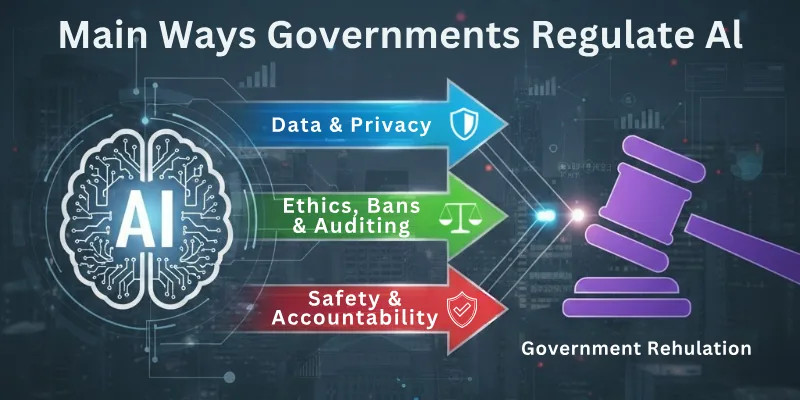

Main Ways Governments Regulate AI

Key ways governments regulate AI:

- AI Data Privacy Laws

- Safety & Risk Management Frameworks

- AI Transparency & Explainability Rules

- Ethical AI Guidelines

- Laws for AI in Healthcare, Finance & Education

- Bans or Restrictions on Harmful AI Uses

- AI Accountability & Human Oversight

- National AI Strategies & Compliance Checks

- AI Testing, Auditing & Certification

- International AI Cooperation & Standards

Each of these regulation methods is explained below.

1. AI Data Privacy Laws

Governments create strict rules to control how AI systems collect, store, and use personal data. These laws aim to keep users safe and informed about how their information is processed. They also require companies to handle data responsibly and to prevent misuse. Overall, they create a safer digital environment for everyone.

What these rules include:

- Consent requirements

- Limits on data storage

- Rules for handling sensitive information

- Penalties for privacy violations
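
As a rough illustration of how consent requirements and storage limits might translate into a check inside an AI data pipeline, here is a minimal Python sketch. The `PersonalRecord` class, the `may_process` and `records_to_delete` helpers, and the 365-day `MAX_RETENTION` value are all hypothetical; actual limits depend on the applicable law and the type of data.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical retention limit; real limits depend on the law and the data type.
MAX_RETENTION = timedelta(days=365)


@dataclass
class PersonalRecord:
    user_id: str
    consent_given: bool
    collected_on: date


def may_process(record: PersonalRecord, today: date) -> bool:
    """Allow processing only with consent and within the retention window."""
    within_retention = (today - record.collected_on) <= MAX_RETENTION
    return record.consent_given and within_retention


def records_to_delete(records: list[PersonalRecord], today: date) -> list[str]:
    """Return the user IDs whose records have outlived the retention limit."""
    return [r.user_id for r in records if (today - r.collected_on) > MAX_RETENTION]
```

A pipeline built this way would call `may_process` before feeding a record to a model and run `records_to_delete` on a schedule to enforce the storage limit.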

2. Safety & Risk Management Frameworks

Governments build frameworks that classify AI systems by risk level, such as low, medium, high, or unacceptable. The exact labels vary by jurisdiction; the EU AI Act, for example, distinguishes minimal, limited, high, and unacceptable risk. High-risk systems must follow strict rules to protect people from harm. These frameworks help identify AI dangers early and prevent unsafe technology from reaching the public.

Key focuses:

- Risk assessment

- Mandatory safety tests

- Monitoring system behavior

- Blocking harmful models
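
To make the idea of risk tiers more concrete, the following Python sketch maps example use cases to tiers and tiers to obligations. The use cases, their assigned tiers, and the obligation lists are illustrative assumptions, not taken from any specific law, and treating unknown use cases as high risk is just one conservative design choice.

```python
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"
    UNACCEPTABLE = "unacceptable"


# Hypothetical mapping of use cases to tiers, for illustration only.
USE_CASE_TIERS = {
    "spam_filter": RiskTier.LOW,
    "customer_chatbot": RiskTier.MEDIUM,
    "loan_approval": RiskTier.HIGH,
    "medical_diagnosis": RiskTier.HIGH,
    "social_scoring": RiskTier.UNACCEPTABLE,
}

# Hypothetical obligations attached to each tier.
OBLIGATIONS = {
    RiskTier.LOW: [],
    RiskTier.MEDIUM: ["disclose AI use to users"],
    RiskTier.HIGH: ["risk assessment", "mandatory safety tests", "ongoing monitoring"],
    RiskTier.UNACCEPTABLE: ["deployment blocked"],
}


def classify(use_case: str) -> RiskTier:
    """Look up the tier; unknown use cases are treated as high risk (an assumption)."""
    return USE_CASE_TIERS.get(use_case, RiskTier.HIGH)


def may_deploy(use_case: str) -> bool:
    """Only systems in the unacceptable tier are blocked outright."""
    return classify(use_case) is not RiskTier.UNACCEPTABLE
```

For example, `OBLIGATIONS[classify("loan_approval")]` lists the checks a high-risk system would have to pass in this sketch, while `may_deploy("social_scoring")` returns `False`.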

3. AI Transparency & Explainability Rules

Many countries require companies to explain how their AI makes decisions. This keeps “black box” systems from affecting people without any explanation. Users gain the right to ask why an AI system made a certain prediction or decision. Transparency improves trust and helps expose hidden bias.

Transparency tools include:

- Human-readable explanations

- Clear model documentation

- Open reporting of AI limitations

- Disclosure when AI interacts with users

4. Ethical AI Guidelines

Governments promote ethical values like fairness, safety, and non-discrimination. These guidelines help developers build AI that respects human rights and avoids harmful behavior. Ethical rules ensure that AI supports society instead of controlling it.

Ethical priorities:

- Fair treatment of all groups

- No biased decisions

- Respect for human dignity

- Equality in AI training data

5. Laws for AI in Healthcare, Finance & Education

AI used in important sectors faces stricter rules because small mistakes can cause major harm. Governments regulate how AI diagnoses patients, approves loans, and makes educational recommendations. This keeps people safe and prevents unfair decisions.

Sector regulations include:

- Medical AI approval tests

- Anti-discrimination rules in finance

- Fair grading and learning analytics

- Transparent decision paths

6. Bans or Restrictions on Harmful AI Uses

Some AI systems are completely banned due to their high risk. Governments block AI that invades privacy, manipulates people, or monitors them without consent. These bans protect basic human freedom.

Commonly restricted areas:

- Social scoring systems

- Deepfake misuse

- Mass surveillance

- Autonomous weapons

7. AI Accountability & Human Oversight

Many governments require a human supervisor for critical AI systems. If something goes wrong, a real person must take responsibility. Oversight is meant to ensure that AI does not replace human judgment in sensitive decisions.

Oversight measures:

- Human review of AI outcomes

- Logs for tracking decisions

- Clear responsibility roles

- Error reporting requirements
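
The oversight measures above could be sketched in Python roughly as follows: each AI outcome is logged, low-confidence outcomes are flagged for human review, and the reviewer's name is recorded with the final decision. The field names, the `log_decision` and `record_review` helpers, and the 0.9 `REVIEW_THRESHOLD` are hypothetical choices for illustration.

```python
from datetime import datetime, timezone

# Hypothetical confidence level below which a human must review the outcome.
REVIEW_THRESHOLD = 0.9


def log_decision(log: list, system: str, subject_id: str,
                 outcome: str, confidence: float) -> dict:
    """Append an auditable entry for one AI decision and return it."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "system": system,
        "subject_id": subject_id,
        "outcome": outcome,
        "confidence": confidence,
        "needs_human_review": confidence < REVIEW_THRESHOLD,
        "reviewer": None,
        "final_outcome": None,
    }
    log.append(entry)
    return entry


def record_review(entry: dict, reviewer: str, final_outcome: str) -> None:
    """Record which person took responsibility and what they decided."""
    entry["reviewer"] = reviewer
    entry["final_outcome"] = final_outcome
    entry["needs_human_review"] = False
```

Keeping the reviewer and the final outcome in the same entry as the model's original output gives auditors a single trail showing who was responsible for each decision.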

8. National AI Strategies & Compliance Checks

Governments publish national strategies to guide AI growth, research, and innovation. These strategies set goals for safety, competitiveness, and public benefit. They also create compliance bodies that ensure companies follow the rules.

Strategy elements:

- Funding for safe AI

- Public awareness programs

- Safety guidelines

- Enforcement agencies

9. AI Testing, Auditing & Certification

Many countries require certification before an AI system can launch. This includes bias tests, safety checks, data audits, and performance reviews. Regular audits then check that the system remains reliable over time.

Auditing includes:

- Bias detection

- Security checks

- Data quality audits

- Error rate analysis
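
Two of these audit checks, bias detection and error rate analysis, can be approximated with simple statistics. The Python sketch below compares approval rates across groups and computes an overall error rate. The function names are hypothetical, and the ratio of lowest to highest approval rate is only one of many possible fairness measures; a common rule of thumb (the "four-fifths rule" from US employment guidelines) flags ratios below 0.8, but the threshold an auditor applies depends on the jurisdiction and context.

```python
from collections import defaultdict


def selection_rates(decisions):
    """decisions: iterable of (group, approved) pairs; returns approval rate per group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest to the highest group approval rate (1.0 means equal rates)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group was approved at all, so rates are equal
    return min(rates.values()) / highest


def error_rate(predictions, labels):
    """Share of predictions that differ from the true labels."""
    wrong = sum(p != y for p, y in zip(predictions, labels))
    return wrong / len(labels)
```

An auditor might run `disparate_impact_ratio(selection_rates(decisions))` on a sample of logged decisions and investigate further when the ratio falls well below 1.0.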

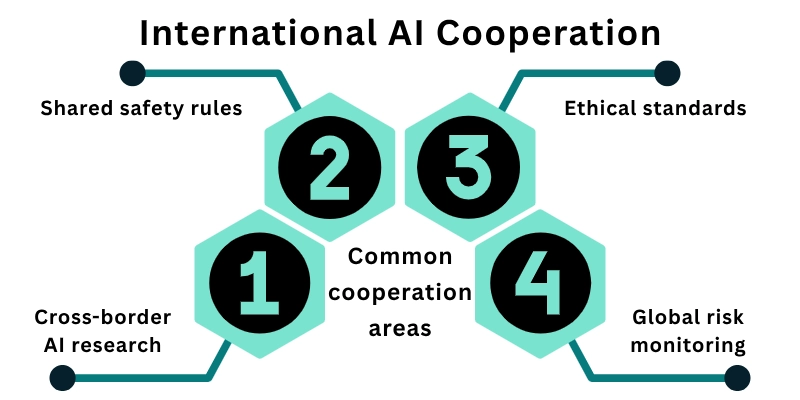

10. International AI Cooperation & Standards

AI affects the entire world, so governments collaborate and create shared international standards. These standards ensure that AI remains safe and fair across countries. This global cooperation reduces risks and encourages responsible innovation.

Common cooperation areas:

- Shared safety rules

- Cross-border AI research

- Ethical standards

- Global risk monitoring

Why Strong AI Regulation Is Becoming Essential

AI now influences healthcare, jobs, finance, security, and personal data. Without proper rules, it can create unfair decisions, privacy risks, and safety problems. Strong regulation helps protect society while still supporting innovation.

This is why countries are actively designing policies to keep the technology safe, fair, and trustworthy.

Future of AI Governance

Governments will likely use more advanced systems to monitor high-risk AI, detect harmful models, and enforce global standards. Some reports suggest that over 70% of countries plan to introduce new AI safety laws by 2026, which, if accurate, shows how fast regulation is expanding. We can expect stronger data protection rules, better AI auditing tools, and international agreements to control powerful AI models.

The future will demand more accountability, more transparency, and stricter protection for users as governments regulate artificial intelligence in deeper and smarter ways.

Final Note

In this guide, we covered how governments regulate artificial intelligence through privacy laws, transparency rules, audits, ethical guidelines, risk controls, and sector-specific regulations. You should now have a clear picture of how countries protect users and guide safe AI development.

My personal recommendation: Start studying your own country’s AI laws first because every region has different rules. Focus on understanding data privacy, high-risk AI categories, and ethical requirements. These areas help you stay compliant and build safe, trustworthy AI systems.

Thank you for reading! For answers to common remaining questions, see the FAQs below on how governments regulate artificial intelligence.

FAQs: How Governments Regulate AI

Here are some of the most commonly asked questions about how governments regulate AI:

How do governments regulate AI?

Governments create laws, ethical guidelines, and auditing requirements to control AI. They regulate data use, safety, and high-risk applications. This helps AI work safely and fairly for everyone.

How do privacy laws apply to AI?

Privacy laws limit how AI collects, stores, and shares personal information. Governments require companies to get consent and handle data responsibly. This keeps user information safe and secure.

Can governments ban certain uses of AI?

Yes, governments ban AI that invades privacy, spreads misinformation, or endangers public safety. These restrictions prevent misuse and protect human rights.

How do governments keep AI decisions fair?

They require transparency and explainability, so AI decisions are clear and can be checked for bias. Human oversight ensures no system makes harmful choices alone. This gives users more reason to trust AI outcomes.

Which sectors face the strictest AI rules?

Healthcare, finance, education, and security face stricter rules. Governments check that AI does not make wrong or discriminatory decisions. This keeps essential services safe for everyone.

How do governments check that AI systems comply?

Agencies audit AI systems, review data, and check for bias. Companies must certify AI performance before launching it publicly. Regular monitoring keeps AI accountable over time.

Why do countries cooperate on AI regulation?

AI crosses borders, so countries share safety standards and ethical rules. Cooperation reduces risks from harmful AI and encourages responsible global development.
